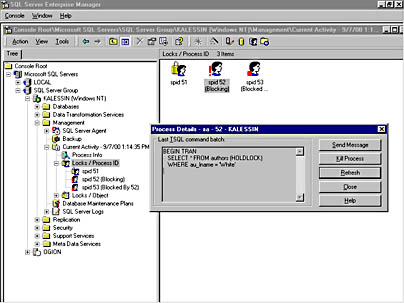

Some of the types are very simple with just a one column (as the one shown in the question), some of them contains 10+ columns of various types. It uses several different User-Defined Table Types as table variables. There is a quite complex application code running as a single database transaction. Thanks Erik Darling for a hint.įirst, I will try to clarify a bit the entire application process to give you the context. NOT NULL, PRIMARY KEY CLUSTERED ( ASC) WITH (IGNORE_DUP_KEY = OFF)Īny idea what could cause these strange deadlocks and how to bypass them?įinally, we have just made a breakthrough with our issue. The table type definition is as follows: CREATE TYPE. INNER JOIN ids ON ids.Id = t.HistoricalCompanyInfoId

Some time ago we started getting a wired deadlocks whereby a single process deadlocks itself on access to a table variable.Įxample Deadlock Report READONLY)ĭELETE. But be aware that if you're not in READ COMMITTED SNAPSHOT mode that will block all reads to the table, and in any case it will block other non-bulk writes.We are running SQL Server 2019 CU12 for one of our customers. Or since the target table is not a heap, you should get exclusive access with SqlBulkCopyOptions.TableLock.

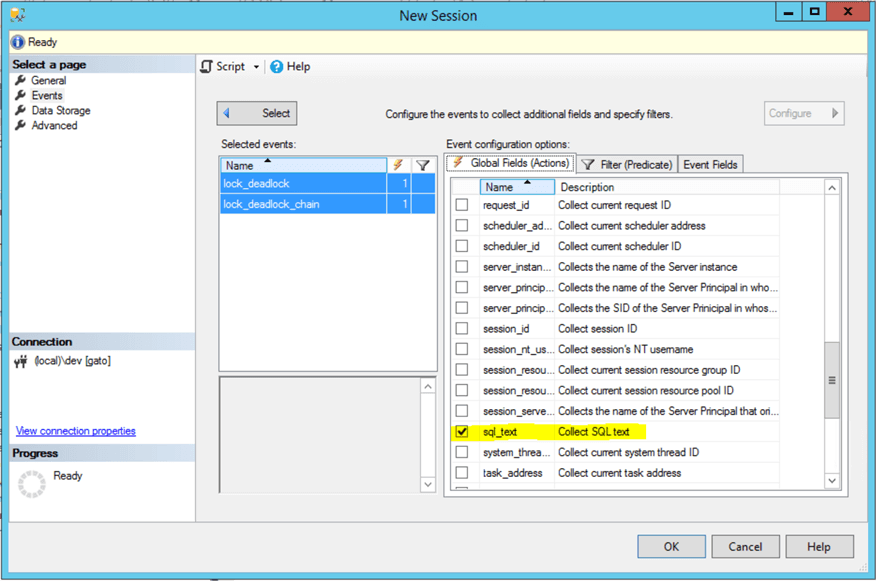

Var pLockMode = (new SqlDbType.VarChar, 32)) Var pResource = (new SqlDbType.NVarChar, 255)) Var cmd = new SqlCommand("sp_getapplock", tran.Connection) Ĭmd.CommandType = CommandType.StoredProcedure Since all your new rows have to go at the end of the index, the loads can't really run in parallel to begin with.īefore calling SqlBulkCopy, run this on your SqlTransaction: static void GetAppLock(string name, SqlTransaction tran) This will force your bulk load sessions to serialize, and load one-at-a-time without relying on the normal row/page locking to do this. And they best way to handle explicit locking in SQL Server is with sp_getapplock. But like a lot of deadlock scenarios it's not necessary to fully understand them to remediate them.Ī deadlock is caused by locking to little, and too late, and they can typically be resolved by forcing an earlier and more exclusive lock. I don't know why this is deadlocking, and I can't reproduce it. )WITH (PAD_INDEX = OFF, STATISTICS_NORECOMPUTE = OFF, SORT_IN_TEMPDB = OFF, DROP_EXISTING = OFF, ONLINE = OFF, ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON) ON To be honest, I don't know enough to know if I should alter that.Īnd here is the non-clustered index that's also on the table: CREATE NONCLUSTERED INDEX ON. ADD CONSTRAINT DEFAULT ('') FOR ĪLTER TABLE. ADD CONSTRAINT DEFAULT ((0)) FOR ĪLTER TABLE. ADD CONSTRAINT DEFAULT (getdate()) FOR ĪLTER TABLE. )WITH (PAD_INDEX = OFF, STATISTICS_NORECOMPUTE = OFF, IGNORE_DUP_KEY = OFF, ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON) ON ĪLTER TABLE. (100) NOT NULL,ĬONSTRAINT PRIMARY KEY CLUSTERED Here is the table's schema, generated from a create script: SET ANSI_NULLS ON The number of rows can vary, but the high end might be something like 4,000. String destColumn = columnNames.Single(x => x.Equals(column.ColumnName, StringComparison.OrdinalIgnoreCase)) ī(column.ColumnName, destColumn) Īwait bulkCopy.WriteToServerAsync(dataTableWithEnumStrings) įrom what I can tell, it looks like a page lock. The column mappings are case sensitive, so grab the destination column so we can use its casing. Add column mappings so we don't have to worry about order when adding new columns/properties.įoreach (DataColumn column in dataTableWithEnumStrings.Columns) Var dataTableWithEnumStrings = ConvertDataTableEnum(dataTable) Var dataTable = ToDataTable(histories, columnNames) Here is the bulk copy code: using (SqlBulkCopy bulkCopy = new SqlBulkCopy(sqlConnection, SqlBulkCopyOptions.Default, sqlTransaction))īulkCopy.DestinationTableName = destinationTableName Both the victim and blockers show the same SQL statements.

This is the line of code that does the inserts: await bulkCopy.WriteToServerAsync(dataTableWithEnumStrings) Should it be possible for deadlocks to occur in this scenario? They are done within a transaction. And that table's PK is an identity column. The bulk inserts are inserting into the same table. But a colleague mentioned it shouldn't be possible with my setup. I think these simultaneous bulk inserts are the reason why the deadlock is occurring. The deadlock usually happens, but not always. Using a message bus, many subscribers can end up doing a bulk insert at roughly the same time. When analyzing a deadlock graph, it looks like the victim is a bulk insert, and the blockers are the same bulk insert.

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed